Commonwealth Bank invested $900 million in fraud protections last financial year. It still ended up with a suspected $1 billion problem in its loan book.

The bank self-referred to police and ASIC after uncovering potentially doctored home loan applications, including documents generated by artificial intelligence. The discovery followed an earlier alleged fraud at NAB, and all four major Australian banks have now flagged suspected fraudulent lending. CBA called it “an industry-wide challenge, with fraud being attempted through mortgage broking and referral channels.”

Most of the coverage has treated this as a lending story. A mortgage fraud problem. A credit risk issue.

It is all of those things. But from where I sit — reviewing customer due diligence and source of funds documentation every day — this is something bigger. If AI-generated documents are good enough to secure a home loan at Australia’s largest bank, they are good enough to pass the identity checks sitting on my desk. And probably yours.

This is not just a mortgage problem. It is a CDD problem. And that makes it an AML problem.

What is CDD, and why does it matter here

If you have ever been asked for your passport when opening a bank account, or asked to explain where your money came from when engaging a solicitor, you have been through customer due diligence.

CDD is the process businesses use to verify that a customer is who they say they are, understand what they do, and assess whether doing business with them creates a risk of money laundering or terrorism financing. In practice, it means collecting identity documents like passports and driver’s licences, checking those documents against databases, understanding the customer’s source of wealth and funds, assessing their risk level, and then continuing to monitor the relationship for anything unusual.

Every bank and financial institution in Australia must perform CDD, and from 1 July 2026 so must every lawyer, accountant, and real estate agent providing certain services. CDD sits at the foundation of the entire AML/CTF compliance framework. Without it, there is no reliable way to distinguish a legitimate customer from someone using the financial system to clean dirty money.

The entire system depends on one assumption: that the documents a customer provides are genuine. AI is breaking that assumption at scale.

What AI can generate now

This is not the document fraud of five years ago. We are past the era of clumsy Photoshop edits, mismatched fonts, and poorly aligned logos. Generative AI tools can now produce near-perfect reproductions of payslips, bank statements, tax returns, identity documents, company records, and financial statements.

According to Sumsub’s 2025-2026 Identity Fraud Report, 2% of all detected fake documents in the first half of 2025 were created using generative AI tools like ChatGPT, Grok, and Gemini — and the trend is accelerating. Inscribe’s 2026 State of Document Fraud Report found that 1 in 16 documents now shows signs of fraud, with AI-generated and template-based fraud increasing fivefold between April and December 2025. The Institute for Financial Integrity projects AI-generated fraud losses will grow from $12 billion in 2023 to $40 billion by 2027.
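For a sense of scale, the two endpoints of that loss projection imply a compound annual growth rate. The short Python sketch below works it out from the rounded figures quoted above. The function name is illustrative, and the result is only as precise as those rounded inputs.

```python
def implied_annual_growth(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Rounded figures quoted in the text: $12 billion (2023) to $40 billion (2027).
rate = implied_annual_growth(12e9, 40e9, 2027 - 2023)
print(f"Implied growth: {rate:.1%} per year")
```

On those figures, projected losses compound at roughly 35% a year.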

The cost of entry has collapsed. Research from Sumsub found that a convincing fake identity document can be generated for as little as $15 in under 30 minutes using freely available tools. That is not organised crime infrastructure. That is a laptop and half an hour.

What makes AI forgeries fundamentally different from traditional fakes is not just quality — it is scale. A single person with the right tools can generate thousands of variations at near-zero cost. The documents replicate authentic formatting, logos, transaction patterns, and data structures with a precision that passes visual inspection, basic OCR extraction, and in many cases, automated verification systems that were designed for a pre-AI threat environment.

Why your verification probably does not catch it

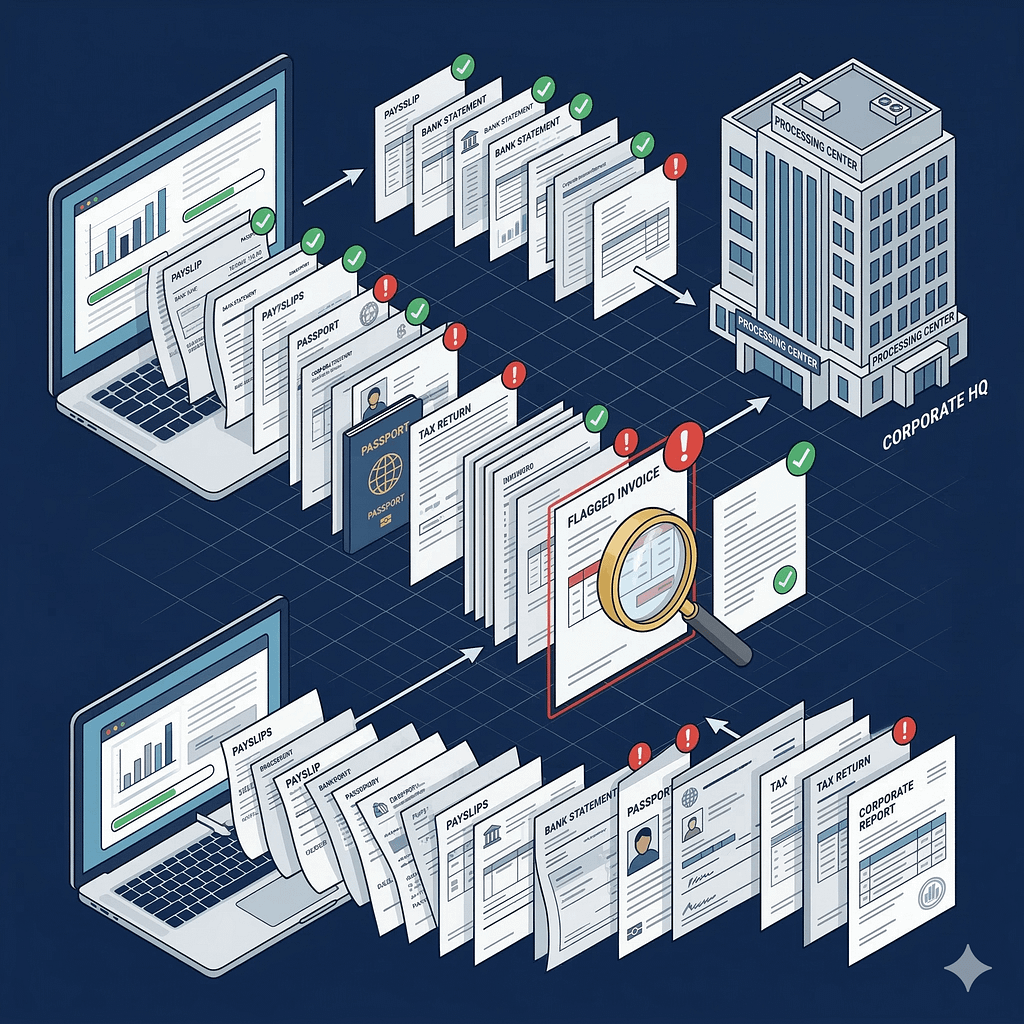

Most CDD verification processes in use today were built for human-quality forgeries. They rely on visual inspection of document images, text extraction via OCR, database lookups to cross-reference identity details, and — for higher-risk customers — manual review by an analyst.

Each of these layers has a specific weakness against AI-generated documents. OCR reads the forgery as truth — it extracts text, it does not assess authenticity. Visual inspection cannot catch formatting that was learned from thousands of genuine samples. Database lookups confirm the real data embedded in a synthetic identity, because that identity was partly built from genuine stolen information. And manual review, even by experienced analysts, struggles when the document looks indistinguishable from the real thing.

The broker channel compounds this. UBS estimates that mortgage brokers now originate around 80% of new Australian home loans. That is a model optimised for speed and volume, with intermediaries submitting documents on behalf of customers — creating distance between the bank’s verification systems and the person behind the application. CBA’s own spokesperson described fraud entering through exactly this channel.

But this vulnerability is not unique to mortgage lending. Any business model that relies on intermediaries to collect documents and pass them upstream has the same structural exposure. That includes financial advisers, accountants lodging documents on behalf of clients, and real estate agents onboarding buyers — many of whom are about to become AML/CTF reporting entities for the first time.

Why this is an AML problem, not just a fraud problem

Here is where the conversation needs to shift.

A synthetic identity is a fabricated persona built by combining real and fake information — for example, a genuine stolen driver’s licence number paired with a fabricated name and address. Unlike traditional identity theft, where someone steals an existing person’s identity, synthetic identity fraud creates an entirely new person who does not exist. These identities can open bank accounts, secure loans, purchase property, and move money through the financial system — all while appearing legitimate to CDD processes.

This is not just a consumer protection issue. Synthetic identity fraud is explicitly recognised by FATF, FinCEN, the US Federal Reserve, and ACAMS as a money laundering and terrorism financing enabler. It is used for bust-out loan schemes, to funnel funds through mule networks, to obscure beneficial ownership for sanctions evasion, and to integrate criminal proceeds into the real economy through property purchases.

The property connection is critical. AUSTRAC CEO Brendan Thomas, in a speech delivered just days ago at a cross-border money flows conference, described what he called a “highly integrated financial crime system” in the Asia-Pacific — one that links industrial-scale scam operations, crypto channels, and traditional asset-based integration, “particularly property.”

Thomas was blunt about the trajectory: “Digital crime does not stay digital. It almost always ends in the real economy.” He described funds originating in scams and cyber-enabled fraud moving rapidly through digital channels, being laundered across multiple jurisdictions, and entering property markets “far removed from the original crime.” By the time those funds reach the point of integration, he said, “their criminal origin may be extremely difficult to detect.”

Now connect that to the CBA story. The logic chain is simple:

AI-generated documents secure fraudulent loans. Fraudulent loans purchase property. Property is sold or refinanced. Proceeds come out clean.

That is a textbook money laundering integration typology. And it is exactly what AUSTRAC’s reformed ongoing CDD obligations are designed to catch.

What the reformed framework expects — and where AI creates gaps

Australia’s reformed AML/CTF Act takes effect for existing reporting entities on 31 March 2026. One of the most significant changes is the separation of CDD into two distinct obligations: initial CDD under Section 28, and ongoing CDD under Section 30.

Initial CDD — verifying the customer’s identity before providing services — has a three-year transitional period. Ongoing CDD does not. It is a Day 1 obligation from 31 March, with no grace period.

Ongoing CDD requires you to monitor customers for unusual transactions, review and update their risk profiles, and reverify their identity when triggers are met. The reformed framework is explicitly outcomes-focused — meaning AUSTRAC will assess whether your controls actually identified and mitigated ML/TF risk, not just whether you had a process that looked good on paper.

Here is the problem AI document fraud creates for this framework.

If the identity was fraudulent from the start — if a synthetic identity passed your initial CDD — then your ongoing monitoring is monitoring a fiction. The customer risk profile was built on fabricated documents. Unusual activity may not appear unusual because the baseline was never real. And when a trigger requires you to reverify the customer’s identity, reverification against the same compromised sources produces the same result.

The reformed framework’s emphasis on outcomes means this gap has consequences. If your verification was beaten by AI-generated documents, the outcome failed — regardless of how robust your process appeared.

What this looks like on an analyst’s desk

For anyone doing CDD, enhanced due diligence, or source of wealth reviews, AI document fraud changes the calculus on what counts as sufficient verification.

A payslip alone is no longer enough to verify employment income. A bank statement alone is no longer enough to verify transaction history. A tax return alone is no longer enough to confirm source of wealth — particularly draft returns submitted through accountants, which have appeared in recent Australian fraud cases.

Single-source document verification, as a category, is weaker than it was a year ago. The practical implication is that multi-source verification — cross-referencing documents against independent data points, checking for internal consistency across a customer’s profile, and treating any single document as one input rather than proof — becomes essential rather than optional.
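To make the idea of treating each document as one input concrete, here is a minimal Python sketch of an income cross-check. Everything in it is an assumption for illustration: the `IncomeEvidence` type, the `cross_check_income` function, the source labels, and the 10% tolerance are hypothetical and do not come from any regulator or vendor.

```python
from dataclasses import dataclass


@dataclass
class IncomeEvidence:
    source: str           # hypothetical label, e.g. "payslip" or "bank_statement"
    annual_income: float  # annualised income figure derived from that source


def cross_check_income(
    evidence: list[IncomeEvidence], tolerance: float = 0.10
) -> tuple[bool, list[str]]:
    """Return (passed, reasons). Fails on a single source or on disagreeing figures."""
    reasons: list[str] = []
    sources = {item.source for item in evidence}
    if len(sources) < 2:
        # One document, however convincing, is treated as an input, not proof.
        reasons.append("fewer than two distinct sources")
        return False, reasons
    figures = [item.annual_income for item in evidence]
    if min(figures) <= 0:
        reasons.append("non-positive income figure")
        return False, reasons
    # Relative gap between the highest and lowest figure across sources.
    spread = (max(figures) - min(figures)) / max(figures)
    if spread > tolerance:
        reasons.append(f"income figures differ by {spread:.0%}")
    return not reasons, reasons


passed, reasons = cross_check_income([
    IncomeEvidence("payslip", 120_000.0),
    IncomeEvidence("tax_return", 80_000.0),
])
print(passed, reasons)
```

Agreement between documents does not prove authenticity, because a forger can fabricate a consistent set. A check like this only adds value when at least one source is obtained independently of the customer or intermediary.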

Jamieson O’Reilly, a white hat hacker and founder of security firm Dvuln, noted in his comments on the CBA fraud that AI-generated documents carry forensic fingerprints even when metadata is clean: “Sentence structure uniformity, statistical regularities in word choice, formatting consistency that’s slightly too clean.” These are subtle signals, but they are signals — and they suggest a new category of red flags for analysts reviewing documents.

Other indicators worth watching: documents that are aesthetically perfect with no natural variation. Concentration patterns — multiple applications from the same intermediary, geographic cluster, or document template. Newly established business entities with suspiciously complete trading histories. Overseas deposits used to meet verification thresholds. And customer profiles that lack the normal digital footprint you would expect — no property records, no employment history, no school records in databases.
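The concentration indicator can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a production control: the `concentration_flags` function, the field names, and the thresholds (at least three applications and more than a quarter of the batch) are all hypothetical.

```python
from collections import Counter


def concentration_flags(applications, key, min_count=3, max_share=0.25):
    """Return {value: count} for values of `key` that dominate a batch of applications."""
    total = len(applications)
    if total == 0:
        return {}
    counts = Counter(app[key] for app in applications)
    # Flag a value only if it is both frequent in absolute terms and a large share of the batch.
    return {
        value: n
        for value, n in counts.items()
        if n >= min_count and n / total > max_share
    }


# Hypothetical batch: ten applications tagged with the submitting intermediary.
batch = [{"broker": b} for b in ["B1"] * 4 + ["B2"] * 2 + ["B3"] * 2 + ["B4", "B5"]]
print(concentration_flags(batch, "broker"))
```

The same function could be pointed at a postcode or a document-template fingerprint instead of an intermediary. A flag is a prompt for closer review, not evidence of fraud, since large legitimate brokers will also show up as concentrated.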

Tranche 2: 80,000 new entities, zero compliance history

This is where the timing gets uncomfortable.

From 1 July 2026, AML/CTF obligations extend to an estimated 80,000 to 100,000 new reporting entities in what AUSTRAC calls “gatekeeper” professions: real estate agents, lawyers, accountants, conveyancers, and dealers in precious metals. These Tranche 2 entities will be required to enrol with AUSTRAC, develop AML/CTF programs, conduct customer due diligence, monitor client activity, and report suspicious matters.

These professions have no prior AML/CTF compliance history. Most have no compliance technology, no trained staff, and no experience identifying money laundering indicators. They are entering a regulatory environment where AI-generated documents are already more sophisticated than what many established financial institutions — with billion-dollar security budgets — can reliably detect.

The irony is hard to miss. Recent fraud cases in Australia have specifically exploited lawyers, accountants, and real estate agents as facilitators — using trust accounts to launder proceeds, submitting draft tax returns to inflate incomes, and inflating property valuations to justify fraudulent loan amounts. These are exactly the professions now being brought under regulation. And the tools criminals used to exploit them are getting better, not worse.

AUSTRAC CEO Thomas has been direct about expectations. He told newly regulated entities that AUSTRAC does not expect perfection on day one: “AML is a practice and we will be supporting and educating you on the types of ML risks in your sectors.” But he followed that with a clear warning: “If a business is wilfully ignoring the obligation to enrol, or we suspect a business is complicit with or wilfully blind to money laundering, they will be the focus of our enforcement efforts.”

For lawyers, accountants, and real estate agents reading this: CDD is not a box-ticking exercise. It requires you to actually verify that your client is who they say they are. AI-generated documents can now pass the kind of visual checks that most small professional firms rely on. If you accept documents at face value without reasonable verification, and those documents turn out to be fraudulent, the regulatory and legal exposure sits with you. AUSTRAC has released starter program kits specifically for small Tranche 2 businesses. Use them.

What AUSTRAC is saying about AI

Responding to the CBA fraud, AUSTRAC CEO Thomas told Information Age: “The rise of AI creates both risks and opportunities. While businesses can use it to strengthen their anti-money laundering programs, particularly as part of their transaction monitoring programs, criminals are also using AI to facilitate fraud and scams.”

He urged regulated businesses to review their systems regularly to ensure they are “robust and create a hostile environment for criminal activity.”

Separately, AUSTRAC deputy CEO Katie Miller has warned banks against flooding AUSTRAC with low-quality AI-generated suspicious matter reports, noting that some banks appear to be submitting high volumes simply to avoid penalties. AUSTRAC wants “higher quality but smaller amounts.”

The message from the regulator is consistent: AI is a double-edged sword. It can be used by criminals to generate fraudulent documents, and it can be used by compliance teams to generate meaningless paperwork. AUSTRAC wants neither. It wants substance.

What this means for you

If you work in financial crime, the CBA story is not someone else’s problem. The same AI tools generating fake payslips for mortgage applications today will generate fake source of wealth documents, fake business financials, and fake trust deeds tomorrow. Your CDD controls are only as strong as the weakest document they rely on.

If you work in a profession about to come under AML/CTF regulation — law, accounting, real estate — this is the threat environment you are walking into. The criminals who exploited your industry before regulation are not going to stop because AUSTRAC is now watching. They are going to get better at it.

And if you are in risk management, technology, or leadership at a financial institution: CBA spent $900 million and still got caught out. That is not a failure of investment. It is a signal that the threat is evolving faster than the controls. The question is not whether AI document fraud will reach your desk. It is whether your systems will catch it when it does.

AUSTRAC’s reformed framework demands outcomes, not processes. In an environment where AI-generated documents can pass your CDD at the point of onboarding, the only defensible outcome is one built on multi-layered verification, cross-source validation, and a healthy suspicion of any document that looks too good to be true.

Because right now, it probably is.

Viktor Ha is a Senior Financial Crime Analyst based in Melbourne. He writes about AML enforcement, Australian regulatory developments, and financial crime career strategy at amlcams.info. Connect with him on LinkedIn.
